Assignment 5: Egg-eater: Heap Allocation

Due: Fri 11/11 at 5pm

git clone

In this assignment you’ll extend to implement arrays and closures, which are sort like eggs lain in the heap, if you don’t think about it too much...

1 Language and Requirements

Egg-eater starts with the same semantics as Diamondback, and adds support for heap-allocated values: arrays and closures. The features are:

array expressions: creating values, accessing components, and mutating components

sequencing of expressions with

e1; e2closures: functions can be used as first-class values

lambda: non-recursive function values can constructed using lambda notation

lambda x,...: e end

The runtime system must add support for

Allocating array and closure values on the heap

Printing array and closure values

Comparing values for structural equality

Read through the whole assignment below carefully, then take note of the recommended TODO list at the bottom for a suggested order to tackle these pieces.

2 Syntax Additions and Semantics

Egg-eater adds two new kinds of heap-allocated values: arrays and closures.

For arrays, the new syntactic forms are array expressions,

along with accessor expressions for getting or setting the contents of

arrays, a unary primitive for checking if a value is an array, and a

unary primitive for getting the length of an array. Array expressions

are a series of zero or more comma-separated expressions

enclosed in (square) brackets. An array access expression is one

expression followed by another enclosed in square brakcets, which

expresses which field to be accessed. isarray is a

primitive (like isnum and isbool) that checks

if a value is an array. Finally, length is a primitive

that produces the length of an array.

For closures we change add a new syntactic forms lambda

x1,...: e end for anonymous function values, a unary primitive

isfun for checking if a value is a function and we now

allow for the function position in a call expression to be an

arbitrary value, not just an identifier.

‹expr› ... ‹array› ‹expr› [ ‹expr› ] ‹expr› [ ‹expr› ] := ‹expr› ‹expr› ; ‹expr› isarray ( ‹expr› ) length ( ‹expr› ) ‹expr› ( ) ‹expr› ( ‹exprs› ) lambda : ‹expr› end lambda ‹params› : ‹expr› end ‹params› IDENTIFIER IDENTIFIER , ‹params› ‹exprs› ‹expr› ‹expr› , ‹exprs› ‹array› [ ] [ ‹exprs› ]

For example, we can create three arrays and access their fields:

let unit = [] in

let one = [1] in

let three = [3, 4, 5] in

three[0]An array-set expression evaluates both arguments, updates the array at the appropriate index, and returns the entire tuple value as its result. We can therefore chain array-set expressions together, and write

let three = [0, 0, 0] in

((three[0] := 1)[1] := 2)[2] := 3

let pair = [0, 0] in

pair[0] := (three[1] := 10)three will be [1,10,3] and pair will be [three, 0]In the Exp datatype, these are represented as:

enum Exp<Ann> {

...

Array(Vec<Exp<Ann>>, Ann),

ArraySet {

array: Box<Exp<Ann>>,

index: Box<Exp<Ann>>,

new_value: Box<Exp<Ann>>,

ann: Ann,

},

Semicolon {

e1: Box<Exp<Ann>>,

e2: Box<Exp<Ann>>,

ann: Ann,

},

Call(Box<Exp<Ann>>, Vec<Exp<Ann>>, Ann),

Lambda {

parameters: Vec<String>,

body: Box<Exp<Ann>>,

ann: Ann

}

MakeClosure {

arity: usize,

label: String,

env: Box<Exp<Ann>>,

ann: Ann

}

}

enum Prim1 {

...

IsArray,

IsFun,

Length,

}

enum Prim2 {

...

ArrayGet,

}This includes an additional form which is purely internal to the

compiler: MakeClosure, which constructs a closure given an

arity, the name of the label and an expression constructing the

captured environment. We will desugar our Lambda and

FunDecls forms to use this.

In Sequential form, these expressions are represented as

SeqExps, with ImmExp components, and additionally we

rename the Call to CallClosure to emphasize that this is

a combination of unpacking the closure with our previous notion of

function call:

enum SeqExp<Ann> {

...

AssertSize(ImmExp, usize, Ann),

Array(Vec<ImmExp>, Ann),

ArraySet {

array: ImmExp,

index: ImmExp,

new_value: ImmExp,

ann: Ann,

},

MakeClosure {

arity: usize,

label: String,

env: ImmExp,

ann: Ann,

},

CallClosure {

fun: ImmExp,

args: Vec<ImmExp>,

ann: Ann,

},

}Note that these expressions are all SeqExps, and not

ImmExps – the allocation of an array or closure counts as a

“step” of execution, and so they are not themselves already values.

3 Semantics and Representation of Arrays

3.1 Array Heap Layout

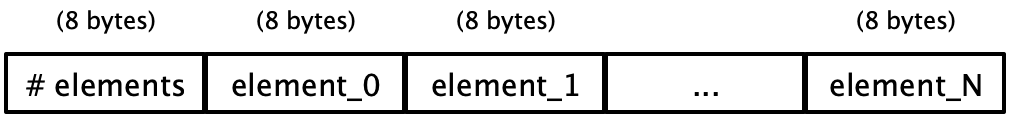

Array expressions should evaluate their sub-expressions in order from left to right, and store the resulting values on the heap. We discussed several possible representations in class for laying out arrays on the heap; the one we recommend you use for this assignment is:

That is, one word is used to store the count of the number of elements in the array, and the subsequent words are used to store the values themselves. Note that the count is an actual integer; it is not an encoded Egg-eater integer value.

An array value is stored in variables as the address of the

first word in the array’s memory, but with an additional 1 added to the value

to act as a tag. So, for example, if the start address of the above memory

were 0x0adadad0, the array value would be 0x0adadad1. With this change, we

extend the set of tag bits to the following:

Numbers:

0in the least significant bitBooleans:

111in the three least significant bitsArrays:

001in the three least significant bitsClosures:

011in the three least significant bits

Visualized differently, the value layout is:

Bit pattern |

| Value type |

|

| Number |

|

| True |

|

| False |

|

| Array |

|

| Closure |

Where W is a “wildcard” 16-bit nibble and b is a “wildcard” bit.

3.2 Accessing Array Contents

In an array access expression, like

let t = [6, 7, 8, 9] in t[1]The behavior should be:

Evaluate the expression in array position (before the brackets), then the index expression (the one inside the brackets).

Check that the array position’s value is actually an array, and signal an error containing

"indexed into non-array"if not.Check that the indexing expression is a number. Signal an error containing

"index not a number"if not.Check that the index number is a valid index for the array value —

that is, it is between 0and the stored number of elements in the array minus one. Signal an error containing"index out of bounds"Evaluate to the array element at the specified index.

These same error messages apply also to setting the value of an

array. Additionally, if at runtime, length is performed on

a non array value, the error message should include "length

called with non-array" analogous to similar previous error messages.

You can do this with just rax, but it causes some

pain. Feel free to use as scratch registers r13 and r14 as

needed (for example saving the index in r14 and using rax

to store the address of the tuple). This can save a number of

instructions. Note that we will generate code that doesn’t need to

use r13 or r14 beyond the extent of this one expression,

so there is no need to worry about saving or restoring the old value

from r14 except in the compilation of the main expression.

You also may want to use an extended syntax for mov in order to combine these

values for lookup. For example, this kind of arithmetic is allowed inside

mov instructions:

mov rax, [rax + r14 * 8 + 8]This would access the memory at the location of rax, offset by

the value of r14 * 8 + 8. So if the value in r14 were,

say 2, this may be part of a scheme for accessing the second

element of a tuple. To aid in this we have generalized the

MemRef type to allow for these dynamically computed offsets:

struct MemRef {

reg: Reg,

offset: Offset,

}

enum Offset {

Constant(i32),

Computed { // reg * factor + constant

reg: Reg,

factor: i32,

constant: i32,

},

}Neither R14 nor anything beyond the typical

Offset::Constant is required to make this work, but you

may find it simpler to compile using these.

3.3 General Heap Layout

The register r15 has been designated as the heap pointer (note

that if you are using R15 for a work register you should either

change that or use a different register for the heap pointer). To

initialize the heap you should either use the technique from

Lecture 10: Tuples and Memory Allocation where we construct an array in Rust and pass it in as

an argument to start_here or you can construct an array in the

data section of your assembly file. For instance if you start the file with

section .data

HEAP: times 1024 dq 0Then the label HEAP will be resolved to an address pointing to

an array of 1024 QWORD values initialized to all 0. This is 8

kilobytes which should be enough to pass the autograder tests.

Whichever approach you take, it is up to your code to initialize the

heap pointer R15 to point to the beginning of this space and

ensure that the value of R15 is always the address of the next

block of free space (in increasing address order) in the provided

block of memory.

3.4 Interaction with Existing Features

Any time we add a new feature to a language, we need to consider its interactions with all the existing features.

We’ll take them one at a time.

Equality: The arithmetic expressions should continue to only allow numbers, and signal errors on tuple values. There is one binary operator that doesn’t check its types, however:

==. We need to decide what the behavior of==is on tuple values and closure values. Note that we have a (rather important) choice here. Clearly, this program should evaluate totrue:let t = [4, 5] in t == tHowever, we need to decide if

[4,5] == [4,5]should evaluate to

trueorfalse. For this assignment we will consider these arguments to be not equal because they are not implemented as the same value on the heap, so if one was mutated, the other would not be. That is, we will use pointer equality rather than structural equality.We will similarly consider two closures to be equal only when they are the same underlying pointer in memory.

Print: The behavior of the unary operators is straightforward, with the exception that we need to implement

printfor tuples and closures.Closures should really be opaque values that you can only interact with by calling, so we will simply print

"<closure>"when we encounter one.For arrays, we could just print the address, but that would be somewhat unsatisfying. Instead, we should recursively print the tuple contents, so that the program

print([4, [true, 3]])actually prints the string

"[4, [true, 3]]". This will require some additional work with pointers instub.rs. A useful hint is to create a recursive helper function forsprint_snake_valthat traverses the nested structure of tuples and prints single values. To help you working with raw bytes in Rust, we have provided a functionload_snake_arraythat takes a pointer to an array in the heap and "parses" it into a struct consisting of a size and a pointer to the first element of the array. You can then access the other elements of the array by using the.add(n)method on pointers. You will need to useunsafecode to implement this, of course.The interesting case is when there is a cyclic heap value. For instance the following program

let pair = [ 0 , 1 ] in pair[1] := pair; paircreates a cyclic linked list in memory, and naively traversing it would cause an infinite recursion. A small number of test cases will check for correctly implementing printing of cyclic values like this, so you should likely put a bound on your initial print function or the autograder might take very long to run.

In the presence of a cyclic value you should print

"<loop>"where the first loop occurs. For instance the above cyclic list should be printed as"[0, <loop>]".

3.5 Closures and Recursive Function Definitions

First, we need to consider how static and dynamic error messages are affected by making functions first-class values.

We no longer need a separate

UndefinedFunctionerror, as functions will be in the same namespace as other values and so we will just use the generalUnboundVariableerror.We no longer need a

FunctionUsedAsValueerror, as functions can be used as values!We will also no longer have a static

ValueUsedAsFunctionerror, but we need instead to have a dynamic error when a number, boolean or array is used as the function in a call. In this case, your code should error with a message that includes the string "called a non-function".Similarly, we will no longer have a static

FunctionCalledWrongAritymessage, as this check will be delayed to runtime. When a function is called with the wrong number of arguments at runtime, your code should raise an error which includes the string "wrong number of arguments".

To implement closures, we will adapt our lambda lifting phase to

desugar the Lambda and FunDefs forms to explicit closure

construction MakeClosure. This is not as big of a change as it

might at first appear: your lambda lifting code is already determining

what the captured environment is, but now instead of adding all of

those variables as extra arguments individually, we will put them into

an array and pass that as a single extra argument, and produce a

closure value. Note that unlike in diamondback, lambda lifting no

longer has to change the Call instructions to pass additional

variables, as the Call instruction will instead be compiled to

pass the environment that is stored in the closure.

See Lecture 12: First-class Functions for more details on the code generation.

As discussed in class, recursive function definitions can be handled in several different ways:

You can construct a single environment and make closures with all of the different variables

You can use Landin’s knot to desugar the recursion into a sequence of let-bindings of lambdas, where you store the functions in a mutable array

Note: While all of the methods presented in class will work in the presence of a garbage collector, since we do not have one for our language, some of the implementations (the one presented in class on 10/24 and the Y combinator) will eventually consume arbitrary memory. So please use either Landin’s knot as presented in class on 10/26 or see the corrected version of the technique shown in 10/24 in the updated notes Lecture 12: First-class Functions.

Any of these will work, and you are free to choose what you are most comfortable with implementing. The first is the most straightforward to fit in the existing pipeline, while the latter two would probably benefit from being implemented by a new desugaring pass. The benefit of the latter two approaches is that if you get the desugaring correct, then you can then rely on your correct implementation of lambdas and arrays to ensure the mutually recursive functions are implemented correctly.

4 Recommended TODO List

Try implementing an interesting test cases using lists, binary trees or another interesting recursive datatypes in Egg-eater. Include one of these examples as

interesting.eggin theexamples/directory.Add your existing code, adding

panic!for the new cases (arrays, lambda) and changed cases (function calls).Write tests for the array features.

Extend

check_progto handle arrays, and adapt it to correctly handle the changed error messages relating to functions.Extend your passes (

uniquify, lambda_lift,sequentialize) to support arrays.Implement code generation for arrays.

Implement printing arrays in Rust.

Write tests for closures/lambdas.

Extend

lambda_liftto translatelambdas to uses ofmake_closure.Implement code generation for

make_closureandcall_closure.Extend your implementation to handle recursive closures. We discussed several methods for this in class (directly, Landin’s knot and the Y combinator) and you are free to use whichever you wish.

5 List of Deliverables

your

compile.rsthe other src/*.rs files in the starter code

any additional modules you saw fit to write

your

runtime/stub.rsthe Cargo.toml

integration tests (

tests/examples.rs)your test input programs (

examples/*.eggfiles), specifically at least oneexamples/interesting.egg

Again, please ensure cargo builds your code properly.

The autograder will give you an automatic 0 if they cannot compile your code!

6 Grading Standards

For this assignment, you will be graded on

Whether your code implements the specification (functional correctness),

Whether you include an interesting

interesting.egg. We’ll have a low bar for interestingness but try to have fun with it!

7 Submission

Wait! Please read the assignment again and verify that you have not forgotten anything!

Please submit your homework to gradescope by the above deadline.